- As astronauts and rovers explore uncharted worlds, finding new ways of navigating these bodies is essential in the absence of traditional navigation systems like GPS.

- Optical navigation relying on data from cameras and other sensors can help spacecraft - and in some cases, astronauts themselves - find their way in areas that would be difficult to navigate with the naked eye.

- Three NASA researchers are pushing optical navigation tech further by making cutting-edge advancements in 3D environment modeling, navigation using photography, and deep learning image analysis.

In a dim, barren landscape like the surface of the Moon, it can be easy to get lost. With few discernible landmarks visible to the naked eye, astronauts and rovers must rely on other means to plot a course.

As NASA pursues its Moon to Mars missions, encompassing exploration of the lunar surface and the first steps on the Red Planet, finding novel and efficient ways of navigating these new terrains will be essential. That's where optical navigation comes in - a technology that helps map out new areas using sensor data.

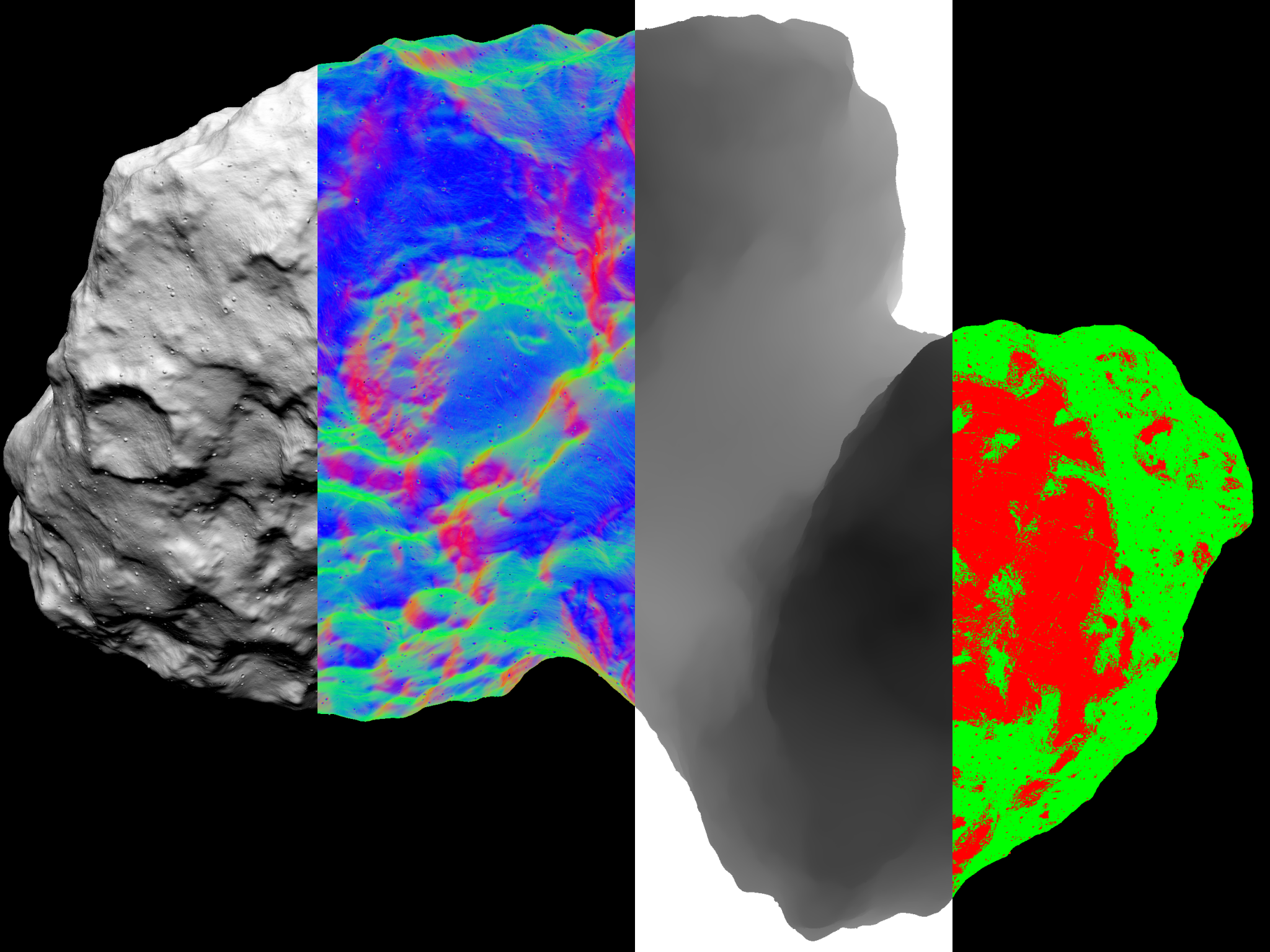

NASA's Goddard Space Flight Center in Greenbelt, Maryland, is a leading developer of optical navigation technology. For example, GIANT (the Goddard Image Analysis and Navigation Tool) helped guide the OSIRIS-REx mission to a safe sample collection at asteroid Bennu by generating 3D maps of the surface and calculating precise distances to targets.

Now, three research teams at Goddard are pushing optical navigation technology even further.

Virtual World Development

Chris Gnam, an intern at NASA Goddard, leads development on a modeling engine called Vira that already renders large, 3D environments about 100 times faster than GIANT. These digital environments can be used to evaluate potential landing areas, simulate solar radiation, and more.

While consumer-grade graphics engines, like those used for video game development, quickly render large environments, most cannot provide the detail necessary for scientific analysis. For scientists planning a planetary landing, every detail is critical.

"Vira combines the speed and efficiency of consumer graphics modelers with the scientific accuracy of GIANT," Gnam said. "This tool will allow scientists to quickly model complex environments like planetary surfaces."

The Vira modeling engine is being used to assist with the development of LuNaMaps (Lunar Navigation Maps). This project seeks to improve the quality of maps of the lunar South Pole region, which is a key exploration target of NASA's Artemis missions.

Vira also uses ray tracing to model how light will behave in a simulated environment. While ray tracing is often used in video game development, Vira uses it to model solar radiation pressure, which refers to the changes in a spacecraft's momentum caused by sunlight.
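To give a sense of the scale of solar radiation pressure, here is a minimal sketch using the simple "cannonball" approximation, in which the spacecraft is treated as a single surface with one reflectivity coefficient. This is not how Vira works (the article says Vira uses ray tracing); the function names, the reflectivity coefficient `c_r`, and the example area are illustrative assumptions.

```python
# Rough solar radiation pressure estimate (cannonball approximation).
# Assumption: this is an illustrative simplification, not Vira's ray-traced model.

SOLAR_CONSTANT_W_M2 = 1361.0      # mean solar irradiance at 1 AU
SPEED_OF_LIGHT_M_S = 299_792_458.0


def radiation_pressure(distance_au: float = 1.0) -> float:
    """Pressure of sunlight on a perfectly absorbing surface, in N/m^2.

    Irradiance falls off with the square of the distance from the Sun.
    """
    if distance_au <= 0:
        raise ValueError("distance_au must be positive")
    irradiance = SOLAR_CONSTANT_W_M2 / distance_au**2
    return irradiance / SPEED_OF_LIGHT_M_S


def srp_force(area_m2: float, c_r: float = 1.3, distance_au: float = 1.0) -> float:
    """Force in newtons on a Sun-facing area.

    c_r is a hypothetical reflectivity coefficient:
    1.0 means fully absorbing, 2.0 means a perfect mirror.
    """
    return radiation_pressure(distance_au) * c_r * area_m2


if __name__ == "__main__":
    print(f"Pressure at 1 AU: {radiation_pressure():.3e} N/m^2")
    print(f"Force on 10 m^2:  {srp_force(10.0):.3e} N")
```

At 1 AU the pressure comes out to roughly 4.5e-6 N/m², so the force on a 10 m² surface is on the order of 6e-5 N. The force is tiny, but it acts continuously, which is why it matters for precise trajectory work. A ray-traced model can account for spacecraft shape, self-shadowing, and surface materials, all of which this single-coefficient sketch ignores.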

Find Your Way with a Photo

Another team at Goddard is developing a tool to enable navigation based on images of the horizon. Andrew Liounis, an optical navigation product design lead, heads the team, working alongside NASA interns Andrew Tennenbaum and Will Driessen, as well as Alvin Yew, the gas processing lead for NASA's DAVINCI mission.

An astronaut or rover using this algorithm could take one picture of the horizon, which the program would compare to a map of the explored area. The algorithm would then output the estimated location of where the photo was taken.

Using one photo, the algorithm can estimate the location to within hundreds of feet. Current work aims to show that with two or more pictures, the algorithm can pinpoint the location to within tens of feet.

"We take the data points from the image and compare them to the data points on a map of the area," Liounis explained. "It's almost like how GPS uses triangulation, but instead of having multiple observers to triangulate one object, you have multiple observations from a single observer, so we're figuring out where the lines of sight intersect."
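The idea of finding where lines of sight intersect can be illustrated with a small 2D sketch. It assumes the observer has matched several features in a photo to known map positions and knows the absolute bearing to each one; the function `locate_observer` and its inputs are hypothetical and are not the Goddard team's algorithm, which works from horizon imagery.

```python
import math

# Least-squares intersection of 2D lines of sight.
# Assumptions (illustrative only): landmarks are already matched to map
# coordinates, and bearings are absolute angles in degrees, measured
# counterclockwise from the map's +x axis.


def locate_observer(landmarks, bearings_deg):
    """Estimate the observer's (x, y) from bearings to known landmarks.

    Each sighting defines a line through the landmark along the bearing
    direction. The estimate is the point minimizing the summed squared
    perpendicular distance to all of those lines.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (px, py), bearing in zip(landmarks, bearings_deg):
        dx = math.cos(math.radians(bearing))
        dy = math.sin(math.radians(bearing))
        # Projector onto the direction perpendicular to the line: I - d d^T
        m11, m12, m22 = 1.0 - dx * dx, -dx * dy, 1.0 - dy * dy
        a11 += m11
        a12 += m12
        a22 += m22
        b1 += m11 * px + m12 * py
        b2 += m12 * px + m22 * py

    # Solve the 2x2 normal equations A x = b by Cramer's rule.
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("lines of sight are parallel; position is undetermined")
    x = (a22 * b1 - a12 * b2) / det
    y = (a11 * b2 - a12 * b1) / det
    return x, y


if __name__ == "__main__":
    # Observer actually at (3, 4): one landmark due "east", one due "north".
    print(locate_observer([(10.0, 4.0), (3.0, 20.0)], [0.0, 90.0]))
```

With exact bearings, two non-parallel sightings already fix the position. With noisy bearings, each additional sighting tightens the least-squares estimate, which is consistent with the article's point that more observations should improve accuracy.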

This type of technology could be useful for lunar exploration, where it is difficult to rely on GPS signals for location determination.

A Visual Perception Algorithm to Detect Craters

To automate optical navigation and visual perception processes, Goddard intern Timothy Chase is developing a programming tool called the GAVIN (Goddard AI Verification and Integration) Tool Suite.

This tool helps build deep learning models, a type of machine learning algorithm that is trained to process inputs like a human brain. In addition to developing the tool itself, Chase and his team are using GAVIN to build a deep learning algorithm that will identify craters in poorly lit areas, such as those on the Moon.

"As we're developing GAVIN, we want to test it out," Chase explained. "This model that will identify craters in low-light bodies will not only help us learn how to improve GAVIN, but it will also prove useful for missions like Artemis, which will see astronauts exploring the Moon's south pole region - a dark area with large craters - for the first time."

As NASA continues to explore previously uncharted areas of our solar system, technologies like these could help make planetary exploration at least a little bit simpler. Whether by developing detailed 3D maps of new worlds, navigating with photos, or building deep learning algorithms, the work of these teams could bring the ease of Earth navigation to new worlds.