Recently, LIU Luolin, a student at the Xi'an Institute of Optics and Precision Mechanics (XIOPM) of the Chinese Academy of Sciences (CAS), proposed a two-stream end-to-end model named TSFNet for thermal and visible image fusion. The results were published in Neurocomputing.

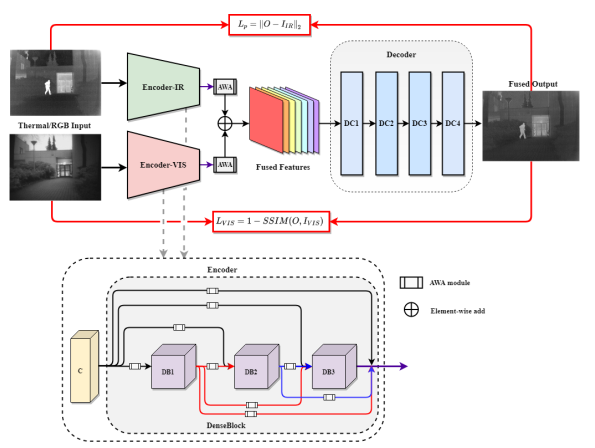

TSFNet, which uses two branches for feature learning, differs markedly from previous two-stream methods and can fully capture the information from both sources.

Thermal images are insensitive to brightness and can distinguish objects from the background by differences in thermal radiation. Visible images match human vision more intuitively and have a higher resolution. Fusing the two can therefore be expected to yield a new image with clear objects and high resolution, suitable for all-weather, day-and-night monitoring.

In this study, to enable the model to autonomously retain the detailed information of the source images during fusion, LIU and his team members adopted an adaptive weight allocation strategy to guide feature selection. The whole framework is divided into three modules: feature extraction, fusion, and reconstruction.
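
To make the three-module idea concrete, here is a toy NumPy sketch of an extract, fuse, reconstruct pipeline with per-pixel adaptive weights. It is not TSFNet itself: the article does not describe the network layers or the actual weighting rule, so the gradient-based features, the activity-based weights, and the function names `extract_features`, `adaptive_weights`, and `fuse` are all assumptions made for illustration.

```python
import numpy as np

def extract_features(img):
    """Toy feature extraction (assumption): intensity plus x/y gradients."""
    img = img.astype(np.float64)
    gy, gx = np.gradient(img)          # gradients along rows, then columns
    return np.stack([img, gx, gy])     # shape (3, H, W)

def adaptive_weights(feat_a, feat_b, eps=1e-8):
    """Per-pixel weights from gradient activity (assumed rule, not the paper's)."""
    act_a = np.abs(feat_a[1:]).sum(axis=0)
    act_b = np.abs(feat_b[1:]).sum(axis=0)
    w_a = (act_a + eps) / (act_a + act_b + 2 * eps)
    return w_a, 1.0 - w_a              # the two weights sum to 1 at every pixel

def fuse(thermal, visible):
    """Two streams -> adaptively weighted fusion -> toy reconstruction."""
    f_t = extract_features(thermal)    # thermal stream
    f_v = extract_features(visible)    # visible stream
    w_t, w_v = adaptive_weights(f_t, f_v)
    fused = w_t * f_t + w_v * f_v      # (H, W) weights broadcast over channels
    return fused[0]                    # reconstruction: keep the intensity channel

if __name__ == "__main__":
    thermal = np.full((8, 8), 0.5)                      # flat, no detail
    visible = np.tile(np.linspace(0.0, 1.0, 8), (8, 1)) # horizontal ramp
    print(fuse(thermal, visible).round(3))
```

In this sketch the source with more local detail at a pixel receives the larger weight, so a flat thermal input contributes almost nothing where the visible input has texture. A learned model would replace both the hand-crafted features and the weighting rule with trained layers.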

According to the experimental results, TSFNet outperforms state-of-the-art methods under different evaluation metrics. In the future, it will provide guidance for designing new image fusion networks.